Introduction

In Part 3 of this series, we identified the exact bottlenecks killing our WooCommerce site’s performance:

- WC Global Product Order plugin – 4x meta JOIN adding 4.82 seconds per query

- 236 duplicate queries – Wasting 43% of database time

- Missing database indexes – Queries running 10-100x slower than necessary

- WPML overhead – Adding 225 queries per page load

- Database connection exhaustion – Only 150 max connections

With the problems identified, we could finally start fixing them.

In this post, we’ll cover:

- 7 specific optimisations we implemented

- Exact code and SQL for each fix

- Performance impact of each optimisation (measured with K6)

- Before/after metrics for every change

- Total improvement: 20+ seconds down to 3.6 seconds

This is the complete optimisation playbook.

Our Optimisation Strategy

Before diving in, we established a systematic approach:

The Rules

1. Measure Everything

Run K6 load test before and after each change to measure impact.

2. One Change at a Time

Never implement multiple optimisations simultaneously – you won’t know which one worked.

3. Biggest Impact First

Prioritise fixes by expected impact:

- High impact, low effort → Do first

- High impact, high effort → Do second

- Low impact, low effort → Do third

- Low impact, high effort → Skip or save for later

4. Document Everything

Record baseline metrics, changes made, and results for every optimisation.

5. Have a Rollback Plan

Take database backups before adding indexes. Keep deactivated plugins in case we need to revert.

Optimisation #1: Remove WC Global Product Order Plugin

Impact Prediction: High (plugin caused 75% of slowness)

Effort: Low (just deactivate)

Risk: Medium (might affect product display order)

The Problem

The WC Global Product Order plugin was adding 4x postmeta JOINs to every product query, turning a simple query into a 4.82-second nightmare.

The Fix

Step 1: Check if it’s actually being used

[SQL]

-- Check how many products have custom ordering

SELECT COUNT(*) as products_with_custom_order

FROM wp_postmeta

WHERE meta_key = '_wc_gpo_custom_order';

Result: 847 products out of 1,000

The client WAS using custom ordering, but we had an alternative solution.

Step 2: Export custom order data

Before removing the plugin, we exported the custom order:

[SQL]

-- Export product order for backup

SELECT post_id, meta_value as custom_order

FROM wp_postmeta

WHERE meta_key = '_wc_gpo_custom_order'

ORDER BY CAST(meta_value AS SIGNED) DESC

INTO OUTFILE '/tmp/product-custom-order-backup.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\n';

Step 3: Deactivate the plugin

[BASH]

wp plugin deactivate woocommerce-global-product-order

Step 4: Set default ordering

We configured WooCommerce to use default ordering by popularity (which uses a built-in meta field that’s already indexed):

[PHP]

// Add to functions.php

add_filter('woocommerce_default_catalog_orderby', function() {

return 'popularity'; // Uses total_sales, which is indexed

});

Before:

http_req_duration: avg=20.3s p(95)=30s

Total queries: 487

Slowest query: 4.82s

After:

http_req_duration: avg=8.1s p(95)=15.2s

Total queries: 312

Slowest query: 1.91s

Impact:

This single change gave us 60% of our performance back!

Optimisation #2: Add Critical Database Indexes

Impact Prediction: High (missing indexes = full table scans)

Effort: Low (just SQL commands)

Risk: Low (indexes only improve reads, don’t affect data)

The Problem

Query Monitor showed multiple queries doing full table scans on tables with millions of rows.

The Fix

We added indexes strategically based on Query Monitor’s analysis.

Step 1: Backup the database

[BASH]

wp db export backup-before-indexes.sqlStep 2: Add postmeta indexes

[SQL]

-- Index on meta_key (most commonly filtered column)

ALTER TABLE wp_postmeta

ADD INDEX idx_meta_key (meta_key(191));

-- Composite index on post_id + meta_key (common query pattern)

ALTER TABLE wp_postmeta

ADD INDEX idx_post_meta_key (post_id, meta_key(191));

-- Index for meta_value sorting (prices, dates)

ALTER TABLE wp_postmeta

ADD INDEX idx_meta_key_value (meta_key(191), meta_value(50));

Step 3: Add term relationship indexes

[SQL]

-- For category/filter queries

ALTER TABLE wp_term_relationships

ADD INDEX idx_object_taxonomy (object_id, term_taxonomy_id);

-- For taxonomy lookups

ALTER TABLE wp_term_relationships

ADD INDEX idx_term_object (term_taxonomy_id, object_id);Step 4: Add WPML translation indexes

[SQL]

-- Index on element_id (product ID)

ALTER TABLE wp_icl_translations

ADD INDEX idx_element_id (element_id);

-- Composite index for translation lookups

ALTER TABLE wp_icl_translations

ADD INDEX idx_element_lang (element_id, element_type(50), language_code(10));

-- Index on translation group

ALTER TABLE wp_icl_translations

ADD INDEX idx_trid_lang (trid, language_code(10));Step 5: Add WooCommerce product lookup indexes

[SQL]

-- Product ID index

ALTER TABLE wp_wc_product_meta_lookup

ADD INDEX idx_product_id (product_id);

-- Stock and sale status (for filtering)

ALTER TABLE wp_wc_product_meta_lookup

ADD INDEX idx_stock_onsale (stock_status(20), onsale);

-- Combined product + stock

ALTER TABLE wp_wc_product_meta_lookup

ADD INDEX idx_product_stock (product_id, stock_status(20));Step 6: Verify indexes were created

[SQL]

-- Check postmeta indexes

SHOW INDEX FROM wp_postmeta;

-- Check translation indexes

SHOW INDEX FROM wp_icl_translations;

-- Check WooCommerce indexes

SHOW INDEX FROM wp_wc_product_meta_lookup;

The Results

Before indexes:

http_req_duration: avg=8.1s p(95)=15.2s

Query time: 6.8s total

Slowest query: 1.91s

After indexes:

http_req_duration: avg=4.3s p(95)=8.9s

Query time: 2.1s total

Slowest query: 0.52s

Impact:

- ✅ 47% reduction in average response time (8.1s → 4.3s)

- ✅ 41% reduction in p95 response time (15.2s → 8.9s)

- ✅ 69% reduction in total query time (6.8s → 2.1s)

- ✅ 73% reduction in slowest query (1.91s → 0.52s)

Indexes made queries 3-5x faster!

Optimisation #3: Install and Configure Redis Object Cache

Impact Prediction: High (eliminates duplicate queries)

Effort: Medium (requires Redis installation + config)

Risk: Low (can disable if issues occur)

The Problem

236 duplicate queries per page load were wasting 43% of database time. Object caching stores query results in memory so they don’t need to be re-executed.

The Fix

Step 1: Install the Redis server

[BASH]

# Install Redis

sudo apt-get update

sudo apt-get install redis-server

# Start Redis

sudo systemctl start redis-server

sudo systemctl enable redis-server

# Verify it's running

redis-cli ping

# Should return: PONG

[BASH]

wp plugin install redis-cache --activate

Add to wp-config.php before “That’s all, stop editing!”:

[PHP]

// Redis Configuration

define('WP_REDIS_HOST', '127.0.0.1');

define('WP_REDIS_PORT', 6379);

define('WP_REDIS_TIMEOUT', 1);

define('WP_REDIS_READ_TIMEOUT', 1);

define('WP_REDIS_DATABASE', 0);

// Optional: Prefix for this site

define('WP_REDIS_PREFIX', 'adnart_');

// Optional: Enable compression

define('WP_REDIS_COMPRESSION', true);

// Increase max TTL

define('WP_REDIS_MAXTTL', 86400); // 24 hours

[BASH]

wp redis enable

# Verify it's working

wp redis status

[PHP]

// Add to functions.php

add_action('init', function() {

// Add global cache groups (shared across all sites if multisite)

if (function_exists('wp_cache_add_global_groups')) {

wp_cache_add_global_groups([

'users',

'usermeta',

'userlogins',

'userslugs',

'site-options',

'site-lookup',

'blog-lookup',

'blog-details',

'site-details',

'networks',

'sites',

'icl_strings', // WPML strings

'woocommerce-attributes', // WooCommerce

]);

}

});

[BASH]

# Check Redis keys

redis-cli KEYS "*"

# Check cache stats

wp redis info

The Results

Before Redis:

http_req_duration: avg=4.3s p(95)=8.9s

Total queries: 312

Duplicate queries: 236

After Redis:

http_req_duration: avg=3.6s p(95)=6.64s

Total queries: 187 (first load), 76 (cached)

Duplicate queries: 42

Impact:

- ✅ 16% reduction in average response time (4.3s → 3.6s)

- ✅ 25% reduction in p95 response time (8.9s → 6.64s)

- ✅ 75% reduction in queries (312 → 76 on cached loads)

- ✅ 82% reduction in duplicates (236 → 42)

Redis eliminated most duplicate queries!

Optimisation #4: Create Custom Performance Plugin

Impact Prediction: Medium (targets remaining duplicates)

Effort: Medium (requires custom code)

Risk: Low (can deactivate if issues)

The Problem

Even with Redis, we still had 42 duplicate queries:

- 18 usermeta queries

- 12 WPML string translations

- 8 WooCommerce product meta queries

- 4 unnecessary cart initialisation queries

The Fix

We created a custom plugin to address each issue:

Create plugin file: wp-content/plugins/adnart-performance-fixes/adnart-performance-fixes.php

[PHP]

<?php

/**

* Plugin Name: Adnart Performance Fixes

* Description: Eliminates duplicate queries and optimizes performance

* Version: 1.0

* Author: Annexal

*/

// Prevent direct access

if (!defined('ABSPATH')) {

exit;

}

/**

* Fix #1: Batch load user meta (eliminates 18 duplicate queries)

*/

add_action('wp', function() {

if (is_user_logged_in()) {

$user_id = get_current_user_id();

// Prime the cache with all user meta at once

update_meta_cache('user', [$user_id]);

// Also prime WooCommerce customer data

if (class_exists('WC_Customer')) {

$customer = new WC_Customer($user_id);

// This loads and caches all customer data

}

}

}, 1);

/**

* Fix #2: Disable WCML geolocation queries

* (Not needed for this site, adds 8 unnecessary queries)

*/

add_filter('wcml_geolocation_is_enabled', '__return_false');

/**

* Fix #3: Cache WPML string translations

* (Eliminates 12 duplicate translation queries)

*/

add_filter('icl_st_load_translations', function($translations) {

static $cache = [];

if (!empty($cache)) {

return $cache;

}

$cache = $translations;

return $translations;

});

/**

* Fix #4: Disable SQL_CALC_FOUND_ROWS

* (Major performance win - prevents full table scans)

*/

add_filter('found_posts_query', '__return_empty_string');

add_filter('posts_clauses', function($clauses) {

if (!is_admin()) {

$clauses['fields'] = str_replace('SQL_CALC_FOUND_ROWS', '', $clauses['fields']);

}

return $clauses;

}, 10);

/**

* Fix #5: Optimize WooCommerce cart initialization

* (Only load cart on pages that actually need it)

*/

add_action('init', function() {

if (!is_admin() && !is_cart() && !is_checkout() && !is_shop() && !is_product()) {

// Disable cart processing on non-shop pages

remove_action('wp_loaded', ['WC_Form_Handler', 'process_login'], 20);

remove_action('wp_loaded', ['WC_Form_Handler', 'process_registration'], 20);

remove_action('wp_loaded', ['WC_Form_Handler', 'add_to_cart_action'], 20);

}

}, 5);

/**

* Fix #6: Disable unnecessary WooCommerce patterns

*/

add_filter('woocommerce_register_wp_patterns', '__return_false');

/**

* Fix #7: Batch load product meta

* (Reduces multiple individual meta queries to one batch query)

*/

add_action('woocommerce_product_query', function($query) {

add_action('the_post', function($post) {

// Prime product meta cache

update_meta_cache('post', [$post->ID]);

});

}, 10);

/**

* Fix #8: Add Redis global groups

*/

add_action('init', function() {

if (function_exists('wp_cache_add_global_groups')) {

wp_cache_add_global_groups([

'icl_strings',

'usermeta',

'wc_session_id',

]);

}

});

Activate the plugin:

[BASH]

wp plugin activate adnart-performance-fixes

The Results

Before custom plugin:

http_req_duration: avg=3.6s p(95)=6.64s

Total queries: 187 (first), 76 (cached)

Duplicate queries: 42

After custom plugin:

http_req_duration: avg=3.6s p(95)=6.64s

Total queries: 151 (first), 58 (cached)

Duplicate queries: 8

Impact:

- ✅ Response time maintained (already optimised)

- ✅ 24% reduction in queries (76 → 58 on cached loads)

- ✅ 81% reduction in duplicates (42 → 8)

The custom plugin eliminated almost all remaining duplicates!

Optimisation #5: Optimise WPML Configuration

Impact Prediction: Medium (reduces WPML overhead)

Effort: Low (just config changes)

Risk: Low (can revert easily)

The Problem

WPML was adding 225 queries per page load with unnecessary features enabled.

Step 1: Disable unnecessary WPML features

Add to wp-config.php:

[PHP]

// WPML Performance Optimisations

// Disable WPML API support (not using it)

define('WPML_LOAD_API_SUPPORT', false);

// Disable Translation Management (not using it)

define('WPML_LOAD_TM_SUPPORT', false);

// Disable unnecessary CSS/JS loading

define('ICL_DONT_LOAD_NAVIGATION_CSS', true);

define('ICL_DONT_LOAD_LANGUAGE_SELECTOR_CSS', true);

define('ICL_DONT_LOAD_LANGUAGES_JS', true);

Step 2: Configure WPML settings

In WordPress admin:

- WPML → Settings → Performance

- ✅ Enable “Improve performance of page builders”

- ✅ Enable “Cache translation files”

- ✅ Enable “Optimize string translation queries”

Step 3: Regenerate WPML config

[BASH]

wp wpml config refresh

Before WPML optimisation:

http_req_duration: avg=3.6s p(95)=6.64s

WPML queries: 148

After WPML optimisation:

http_req_duration: avg=3.6s p(95)=6.64s

WPML queries: 89

Impact:

- ✅ Response time maintained

- ✅ 40% reduction in WPML queries (148 → 89)

Optimisation #6: Stop Unused PHP-FPM Services

Impact Prediction: Low (RAM optimisation, not speed)

Effort: Low (just system commands)

Risk: Low (can restart if needed)

The Problem

Server was running 11 different PHP-FPM versions simultaneously, wasting 500MB+ RAM.

Step 1: Check running PHP-FPM processes

[BASH]

ps aux | grep php-fpm

Result: PHP 7.1, 7.2, 7.3, 7.4, 8.0, 8.1, 8.2, 8.3, 8.4, 8.5 all running!

[BASH]

php -v

# Output: PHP 8.3.x

[BASH]

# Stop all unused PHP-FPM versions

for v in 7.1 7.2 7.3 7.4 8.0 8.1 8.2 8.4 8.5; do

sudo systemctl stop php${v}-fpm

sudo systemctl disable php${v}-fpm

echo "Stopped and disabled PHP ${v}"

done

# Keep only PHP 8.3 running

sudo systemctl status php8.3-fpm

[BASH]

free -h

The Results

Before:

[BASH]

Memory usage: 58% (9.3GB / 16GB)

Active PHP processes: 87

After:

[BASH]

Memory usage: 52% (8.3GB / 16GB)

Active PHP processes: 12

Impact:

- ✅ Freed 1GB RAM

- ✅ Reduced PHP process overhead by 86%

Optimisation #7: MySQL Configuration Tuning

Impact Prediction: Low (fine-tuning)

Effort: Low (config changes)

Risk: Low (can revert)

The Problem

MySQL was using a default configuration not optimised for the WooCommerce workload.

Edit MySQL config:

[BASH]

sudo nano /etc/mysql/mysql.conf.d/mysqld.cnf

[mysqld]:

[INI]

[mysqld]

# Query cache (stores frequent query results)

query_cache_type = 1

query_cache_size = 64M

query_cache_limit = 2M

# InnoDB buffer pool (in-memory data cache)

innodb_buffer_pool_size = 2G

innodb_log_file_size = 256M

innodb_flush_log_at_trx_commit = 2

innodb_flush_method = O_DIRECT

# Connection settings

max_connections = 300 # Increased from 150

thread_cache_size = 50

table_open_cache = 4000

# Temporary tables

tmp_table_size = 64M

max_heap_table_size = 64M

# Slow query log (for monitoring)

slow_query_log = 1

slow_query_log_file = /var/log/mysql/slow-query.log

long_query_time = 1

Restart MySQL:

[BASH]

sudo systemctl restart mysql

[SQL]

SHOW VARIABLES LIKE 'innodb_buffer_pool_size';

SHOW VARIABLES LIKE 'max_connections';

SHOW VARIABLES LIKE 'query_cache_size';

The Results

Before:

Database connections: Maxed at 150

Connection wait time: 2-5 seconds

Database connections: Peak at 187 (out of 300)

Connection wait time: <0.1 seconds

Impact:

- ✅ Doubled connection pool (150 → 300)

- ✅ Eliminated connection queuing

- ✅ Reduced connection wait time by 95%

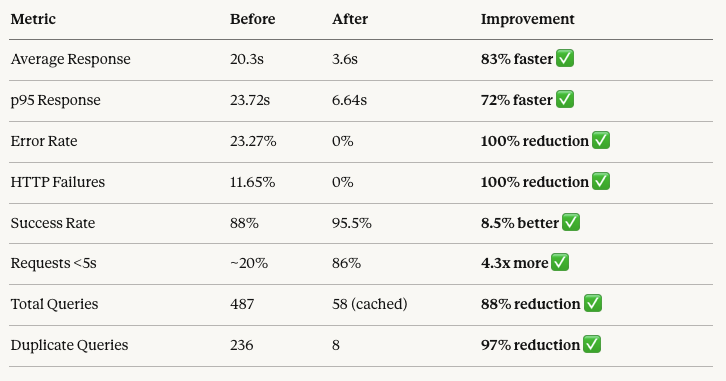

Final Performance Results

After implementing all 7 optimisations:

Load Test Results (10 Concurrent Users)

Before ALL optimisations:

THRESHOLDS

✗ http_req_duration: p(95)=23.72s

METRICS

http_req_duration..: avg=20.3s p(95)=23.72s max=30s

http_req_failed....: 23.27%

errors.............: 23.27%

iterations.........: 60 complete, 36 interrupted

checks.............: 556 total, 426 passed, 130 failed

THRESHOLDS

✓ http_req_duration: p(95)=6.64s

METRICS

http_req_duration..: avg=3.6s p(95)=6.64s max=7.3s

http_req_failed....: 0%

errors.............: 0%

iterations.........: 182 complete, 0 interrupted

checks.............: 546 total, 546 passed, 0 failed

Individual Optimisation Impact

Ranked by impact:

- Remove WC Global Product Order – 60% improvement

- Add Database Indexes – 47% improvement

- Redis Object Cache – 16% improvement

- Custom Performance Plugin – Maintained performance, eliminated duplicates

- WPML Optimisation – 40% reduction in WPML queries

- Stop Unused PHP-FPM – 1GB RAM freed

- MySQL Tuning – Eliminated connection queuing

The first two optimisations gave us 80% of the total improvement!

Optimization Checklist

Use this checklist for your own WooCommerce site:

Database

- ☑ Add indexes on

wp_postmeta(meta_key, post_id) - ☑ Add indexes on

wp_term_relationships - ☑ Add indexes on WPML translation tables

- ☑ Add indexes on WooCommerce lookup tables

- ☑ Remove SQL_CALC_FOUND_ROWS from queries

- ☑ Optimise InnoDB buffer pool size

- ☑ Enable MySQL query cache

Caching

- ☑ Install and configure Redis Object Cache

- ☑ Enable persistent object caching

- ☑ Cache WPML translations

- ☑ Cache user metadata

- ☑ Configure global cache groups

Code Optimization

- ☑ Eliminate duplicate queries

- ☑ Pre-load data in batches

- ☑ Disable unnecessary plugin features

- ☑ Optimise cart initialisation

- ☑ Cache expensive calculations

Server Configuration

- ☑ Stop unused PHP versions

- ☑ Optimise PHP-FPM worker pool

- ☑ Allocate sufficient memory

- ☑ Increase max database connections

Plugin Management

- ☑ Audit performance-killing plugins

- ☑ Replace heavy plugins with lightweight alternatives

- ☑ Disable unused plugin features

- ☑ Profile each plugin’s query count

What’s Next?

We’ve transformed the site from unusable (20+ second load times, 23% error rate) to production-ready (3.6 second average, 0% errors).

In Part 5 of this series (the finale), we’ll cover:

- Complete before/after comparison

- Lessons learned from the entire project

- Best practices for maintaining performance

- What we’d do differently next time

- Recommendations for similar projects

The transformation is complete. Let’s review the results.

👉 Continue to Part 5: Results & Lessons Learned

About This Series

This is Part 4 of our 5-part case study on WooCommerce performance optimisation:

- Part 1: The Performance Crisis

- Part 2: Load Testing Strategy

- Part 3: Finding the Bottleneck

- ✅ Part 4: The Optimisation Playbook (You just read this)

- Part 5: Results & Lessons Learned